AI Infrastructure Explained: The Essential Guide to Hardware, Networks, and Systems Powering Modern Machine Learning

Explainers | Saturday, 16 May 2026 at 08:00

AI infrastructure is the physical and digital foundation that makes modern artificial intelligence possible. It includes specialized computer chips like GPUs, massive data centers, high-speed networks, energy systems, and cloud platforms that work together to train and run AI models. Without this infrastructure, tools like ChatGPT and other AI systems could not exist.

Building and running AI requires far more than just software code. Companies spend billions of dollars on semiconductors, electricity, cooling systems, and data center facilities. The global race to develop AI has created intense competition for computing power, chips, and energy resources. Nations now view AI infrastructure as critical to their economic and security interests.

This infrastructure connects many industries and technologies. Chip manufacturers produce specialized processors. Power companies supply electricity to data centers that can use as much energy as small cities. Cloud platforms rent out computing resources to businesses that cannot afford their own systems. Understanding how these pieces fit together reveals why AI has become one of the most important technology and policy issues of our time.

Key Takeaways

- AI infrastructure combines hardware like GPUs, data centers, networking equipment, and energy systems that power AI models

- Training and running AI requires massive computing resources, creating global competition for chips, electricity, and data center capacity

- The development of AI infrastructure has become a strategic priority for nations due to its economic and security implications

Defining Modern AI Systems

Modern AI systems represent a fundamental shift in computing architecture, built to handle workloads that traditional IT infrastructure cannot support. These systems operate at scales measured in petaflops and exaflops, processing datasets that span terabytes to petabytes while training models with billions or trillions of parameters.

Core AI Workload Categories:

- Machine learning systems that identify patterns in structured and unstructured data

- Deep learning networks with multiple neural layers requiring intensive matrix operations

- Generative AI models that create new content from learned distributions

- Large language models (LLMs) that process and generate human language at scale

- Transformers that use attention mechanisms for parallel processing of sequential data

The computational demands vary significantly across these categories. Training a frontier LLM requires thousands of specialized processors running continuously for weeks or months. These workloads consume megawatts of power and generate massive amounts of heat that data centers must dissipate.

Modern AI systems depend on hardware designed specifically for tensor operations. Graphics processing units handle parallel computations that central processing units cannot efficiently execute. Tensor processing units offer further optimization for specific AI operations.

The shift toward transformer architectures changed infrastructure requirements dramatically. These models demand high-bandwidth memory, fast interconnects between processors, and storage systems that can feed training data at rates of hundreds of gigabytes per second. Data center operators now design facilities around these specifications rather than adapting general-purpose infrastructure.
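
To make the attention mechanism behind these requirements concrete, here is a minimal sketch of scaled dot-product attention in pure Python. It is a toy under stated assumptions: the tiny `q`, `k`, and `v` matrices are invented for illustration, and production frameworks run the same arithmetic as large batched matrix multiplications on accelerators, which is where the memory-bandwidth pressure comes from.

```python
import math

def softmax(row):
    """Numerically stable softmax over a list of floats."""
    peak = max(row)
    exps = [math.exp(x - peak) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention for small lists of vectors."""
    d_k = len(keys[0])
    outputs = []
    for q_vec in queries:
        # Score this query against every key, scaled by sqrt(d_k).
        scores = [
            sum(qi * ki for qi, ki in zip(q_vec, k_vec)) / math.sqrt(d_k)
            for k_vec in keys
        ]
        weights = softmax(scores)
        # Each output is a weighted average of the value vectors.
        outputs.append([
            sum(w * v_vec[j] for w, v_vec in zip(weights, values))
            for j in range(len(values[0]))
        ])
    return outputs

# Hypothetical 2-token example: each query matches one key more strongly.
q = [[1.0, 0.0], [0.0, 1.0]]
k = [[1.0, 0.0], [0.0, 1.0]]
v = [[10.0, 0.0], [0.0, 10.0]]
result = attention(q, k, v)
print(result)
```

Every query is scored against every key, so the work grows with the square of the sequence length, and each query's row can be computed independently of the others. That independence is what parallel hardware exploits.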

Energy consumption has emerged as a critical constraint. Training runs for large models can cost millions in electricity alone, making power availability and efficiency central concerns for AI deployment.
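
A back-of-the-envelope sketch shows how electricity costs reach the millions. Every input below is an assumption chosen for illustration (cluster size, run length, a PUE-style facility overhead multiplier, and the electricity price); only the per-GPU wattage falls in the 400-700 W range cited elsewhere in this guide.

```python
def training_energy_cost(num_gpus, watts_per_gpu, days, pue, usd_per_kwh):
    """Return (energy_mwh, cost_usd) for a training run that never pauses."""
    hours = days * 24
    it_kwh = num_gpus * watts_per_gpu * hours / 1000  # accelerator energy only
    facility_kwh = it_kwh * pue                       # add cooling and power overhead
    return facility_kwh / 1000, facility_kwh * usd_per_kwh

# Hypothetical run: 10,000 GPUs at 700 W for 90 days.
energy_mwh, cost_usd = training_energy_cost(
    num_gpus=10_000, watts_per_gpu=700, days=90, pue=1.3, usd_per_kwh=0.08
)
print(f"{energy_mwh:,.0f} MWh, ${cost_usd:,.0f}")
```

The estimate ignores CPUs, networking, and storage, so real bills would likely be higher.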

Strategic Importance of Underlying Technology

AI infrastructure has shifted from a backend utility to a core strategic asset. Organizations that control compute resources, data center capacity, and semiconductor supply chains now hold significant competitive advantages in deploying advanced AI systems.

The concept of the AI factory has emerged as a framework for understanding this shift. These facilities combine dense GPU clusters, high-speed networking, and specialized cooling systems to process training workloads at scale. Companies investing in this infrastructure gain direct control over model development timelines and deployment costs.

Scalability determines which organizations can progress from prototype to production. Infrastructure limitations create bottlenecks in three critical areas:

- Compute capacity: Access to sufficient GPU resources for training and inference

- Power delivery: Data centers require 50-100 megawatts for large-scale AI operations

- Network throughput: High-bandwidth connections between compute nodes prevent training delays

Geopolitical dynamics now shape infrastructure decisions. Semiconductor manufacturing, cloud capacity, and energy supply have become matters of national interest. Governments treat AI infrastructure similarly to electricity grids or telecommunications networks.

The infrastructure gap separates organizations into distinct categories. Those with proprietary compute capacity can iterate rapidly on model architectures. Those relying on shared cloud resources face allocation constraints and cost unpredictability. This divide affects research velocity, model performance, and time to market.

Financial markets reflect this reality. Capital flows increasingly target companies controlling physical infrastructure rather than application-layer services. Infrastructure ownership provides durable advantages that software alone cannot replicate.


Main Elements Supporting AI Models

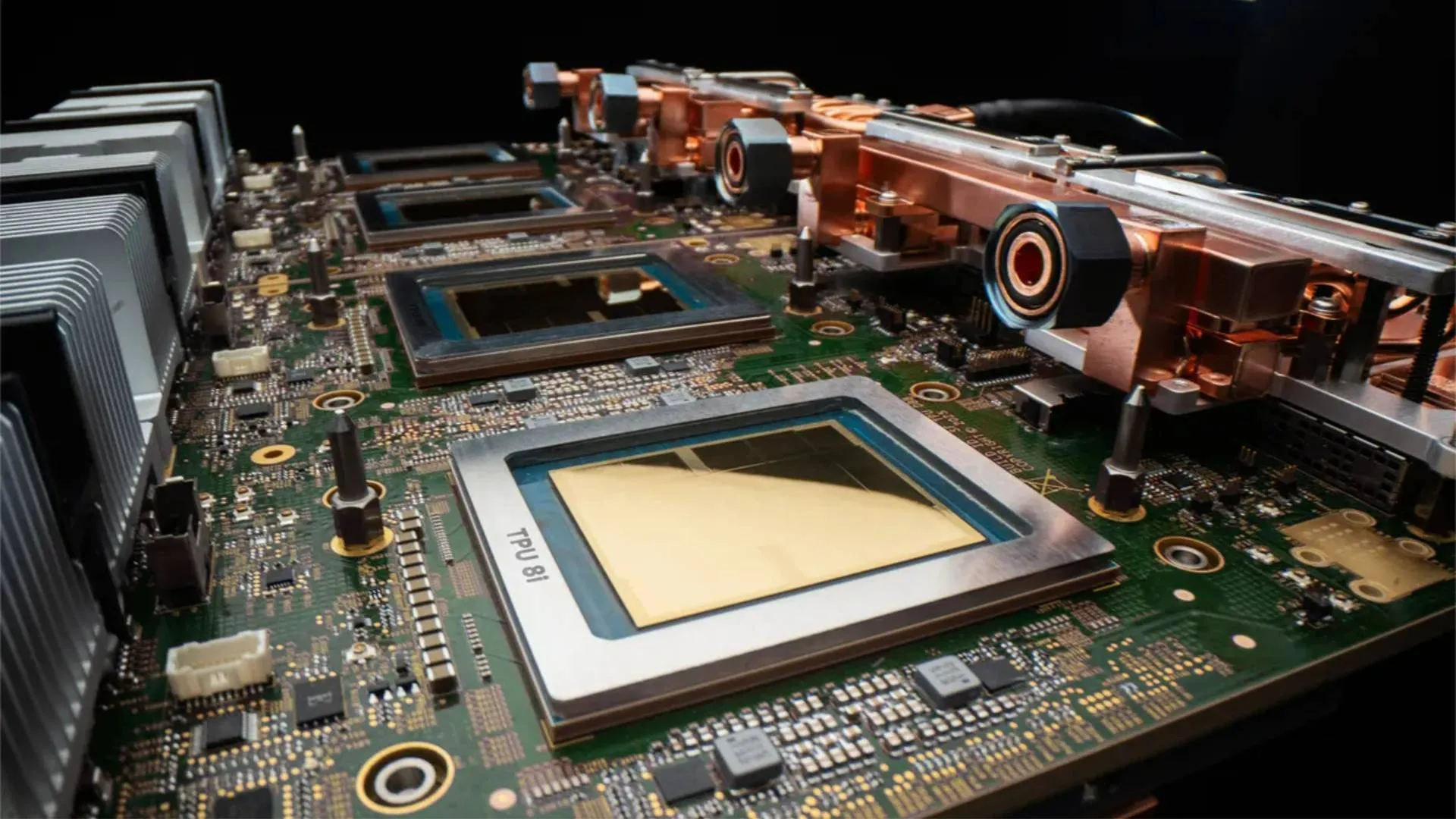

AI models depend on a technology stack that spans hardware, software, and data systems working in coordination. The compute layer forms the foundation, consisting of specialized processors like GPUs and TPUs that handle the intensive mathematical operations required for training and inference.

Core Infrastructure Components:

- Compute resources - GPUs, TPUs, and accelerators that process AI workloads

- Storage systems - High-performance storage for datasets and model checkpoints

- Network architecture - High-bandwidth interconnects between compute nodes

- Data management - Systems for ingestion, validation, and pipeline orchestration

The model layer sits above the hardware and provides the frameworks necessary for development. TensorFlow and PyTorch dominate as machine learning frameworks, offering developers tools to build, train, and deploy models efficiently. These machine learning libraries abstract complex operations into accessible APIs that speed up the AI lifecycle.

Organizations must maintain robust data management practices throughout model development. Raw data flows through preprocessing pipelines, gets transformed into training-ready formats, and feeds into model training workflows. Storage infrastructure must handle petabytes of data while maintaining low-latency access patterns.

The software stack also includes orchestration tools that manage resource allocation across distributed systems. These platforms coordinate GPU clusters, schedule training jobs, and optimize utilization rates across AI infrastructure. Teams rely on monitoring systems to track performance metrics, identify bottlenecks, and maintain operational efficiency during both training and production inference phases.

GPUs and Specialized AI Compute

Graphics Processing Units remain the dominant hardware for AI model training and inference across hyperscale data centers. Unlike CPUs that handle sequential tasks, GPUs process thousands of operations simultaneously through parallel architecture. This design makes them essential for matrix calculations that power neural networks.

NVIDIA controls an estimated 80-95% of the data center GPU market. Cloud providers provision GPU instances through virtualized access, allowing organizations to rent compute capacity by the hour. A single high-end GPU can cost $30,000 to $40,000, while full training clusters for frontier models require tens of thousands of units.

Key AI Compute Hardware:

| Hardware Type | Optimized For | Primary Users |
| --- | --- | --- |
| GPUs (Graphics Processing Units) | Parallel processing, flexible workloads | Most AI companies, research labs |
| TPUs (Tensor Processing Units) | Matrix operations, Google's frameworks | Google, select cloud customers |
| Custom ASICs | Specific neural network architectures | Meta, Amazon, large tech firms |


Tensor Processing Units represent Google's proprietary answer to GPU dependence. These chips are built specifically for tensor computations and were originally designed around TensorFlow; they are also used with other frameworks such as JAX. TPUs deliver better performance per watt for certain workloads but lack the ecosystem flexibility of GPUs.

The economics of AI compute centers on utilization rates and power efficiency. Training runs must maximize GPU uptime since idle hardware generates no value while consuming significant electricity. Data centers now design cooling systems, power delivery, and network topology around dense GPU deployments that can draw 400-700 watts per chip.
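
The utilization point can be illustrated with a short calculation. The hourly rate of $2.50 is a made-up figure, and the function name is hypothetical; the sketch only shows that the effective price of productive compute rises as utilization falls.

```python
def cost_per_useful_gpu_hour(hourly_cost_usd, utilization):
    """Effective price of one productive GPU-hour at a utilization in (0, 1]."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return hourly_cost_usd / utilization

for util in (0.9, 0.6, 0.3):
    effective = cost_per_useful_gpu_hour(2.50, util)
    print(f"{util:.0%} utilized -> ${effective:.2f} per useful GPU-hour")
```

At 30% utilization, the same hardware costs three times as much per unit of useful work as at 90%.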

Specialized accelerators continue emerging from hyperscalers seeking cost advantages and supply chain independence. Most organizations still rely on GPU instances from AWS, Azure, or Google Cloud rather than building custom silicon.


Operational Workflow of AI Data Centers

AI data centers operate through a series of coordinated stages that move data from ingestion to model deployment. The workflow begins with data ingestion, where massive datasets arrive from multiple sources and enter high-throughput storage systems. These facilities process petabytes of training data daily, requiring specialized data pipelines that can handle the volume and velocity demands of modern AI workloads.

Data preprocessing transforms raw inputs into formats suitable for model training. This stage involves cleaning, normalization, and feature extraction using distributed data processing frameworks that span hundreds of GPU nodes. The infrastructure must maintain low latency between storage and compute resources to prevent bottlenecks during these operations.

MLOps platforms orchestrate the entire AI pipeline, managing version control, experiment tracking, and model deployment. These systems integrate with DevOps workflows to automate infrastructure provisioning and scaling. Most modern AI data centers use Kubernetes-native architectures that enable dynamic resource allocation based on workload demands.

The workflow typically follows this sequence:

- Raw data arrives and enters storage systems

- Preprocessing nodes clean and format datasets

- Training clusters execute model development

- Validation systems test model performance

- Deployment pipelines push models to production

- Monitoring systems track inference operations
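
The sequence above can be sketched as a chain of stage functions that pass a shared state along. This is a toy illustration, not how any particular MLOps platform works: the stage names mirror the list, the "model" is just a mean, and all data is invented.

```python
def ingest(state):
    state["records"] = [" 3 ", "5", "bad", "8 "]  # stand-in for raw data
    return state

def preprocess(state):
    cleaned = [r.strip() for r in state["records"]]
    state["dataset"] = [int(r) for r in cleaned if r.isdigit()]  # drop invalid rows
    return state

def train(state):
    # Placeholder "model": the mean of the cleaned dataset.
    state["model"] = sum(state["dataset"]) / len(state["dataset"])
    return state

def validate(state):
    state["approved"] = 0 < state["model"] < 10  # hypothetical acceptance check
    return state

def deploy(state):
    state["deployed"] = state["approved"]
    return state

def monitor(state):
    state["log"].append(f"model={state['model']:.2f} deployed={state['deployed']}")
    return state

STAGES = [ingest, preprocess, train, validate, deploy, monitor]

def run_pipeline():
    state = {"log": []}
    for stage in STAGES:
        state = stage(state)
    return state

final_state = run_pipeline()
print(final_state["log"])
```

Keeping each stage as a separate function with a common interface is one simple way to let an orchestrator retry, skip, or scale stages independently.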

Automation plays a critical role throughout these stages. The infrastructure continuously adjusts compute allocation, manages GPU scheduling, and handles failover scenarios without manual intervention. This operational model supports the rapid iteration cycles required for AI development while maintaining the reliability standards expected of enterprise-grade infrastructure.

Networking and High-Speed Interconnects for AI

AI workloads demand fundamentally different networking infrastructure than traditional enterprise applications. The primary challenge centers on scaling bandwidth and connectivity while maintaining low latency across thousands of GPU accelerators working in parallel.

Modern AI data centers rely on specialized networking technologies to handle massive data movement between compute nodes. InfiniBand emerged as an early solution for high-performance computing, offering low-latency communication through Remote Direct Memory Access (RDMA). RDMA lets one node read or write another node's memory without involving the remote CPU or operating system, and GPU-aware variants extend this to accelerator memory, reducing overhead and improving training efficiency.

Key networking requirements for AI infrastructure:

- Ultra-low latency for gradient synchronization during distributed training

- High bandwidth capacity (400G to 800G per port becoming standard)

- Non-blocking network topologies to prevent communication bottlenecks

- RDMA support for direct memory access between accelerators
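
To see why port bandwidth matters for gradient synchronization, the sketch below estimates the bandwidth-only time of a ring all-reduce, a common collective pattern in which each worker transfers about 2(N-1)/N times the gradient buffer. The 10 GB gradient size and 8-worker ring are assumptions, and latency, congestion, and compute overlap are ignored.

```python
def ring_allreduce_seconds(gradient_bytes, num_workers, link_gbps):
    """Bandwidth-only estimate for a ring all-reduce; latency terms are ignored."""
    if num_workers < 2:
        return 0.0
    bytes_per_second = link_gbps * 1e9 / 8  # convert gigabits/s to bytes/s
    # Each worker sends and receives 2 * (N - 1) / N of the gradient buffer.
    traffic = 2 * (num_workers - 1) / num_workers * gradient_bytes
    return traffic / bytes_per_second

# Hypothetical: 10 GB of gradients synchronized across 8 workers.
t400 = ring_allreduce_seconds(10e9, 8, 400)
t800 = ring_allreduce_seconds(10e9, 8, 800)
print(f"400G: {t400:.3f} s per sync, 800G: {t800:.3f} s per sync")
```

Because this cost is paid on every synchronization step, doubling link speed roughly halves the communication time in this simplified model.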

Ethernet alternatives are gaining traction through initiatives like the Ultra Ethernet Consortium, which aims to provide standardized high-speed connectivity for AI workloads. Meanwhile, UALink focuses on accelerator-to-accelerator communication, addressing the growing need for efficient interconnects between GPUs within the same system.

Optical interconnects increasingly replace copper connections for longer distances within data centers. Co-Packaged Optics (CPO) moves the optical engines into the same package as the switch or accelerator silicon, shortening the electrical path and reducing the power needed per bit in AI scale-out networks.

The shift toward Composable Disaggregated Infrastructure using CXL (Compute Express Link) represents another evolution. This interconnect standard enables CPUs and accelerators to share memory pools directly at the hardware level, improving resource utilization across distributed systems.

The Role of Cloud Platforms in AI Development

Cloud platforms provide the compute foundation that makes modern AI development economically viable. Training large language models requires thousands of GPUs running in parallel for weeks or months. Building this infrastructure independently costs hundreds of millions of dollars, putting it out of reach for most organizations.

AWS, Microsoft Azure, and Google Cloud Platform dominate the AI infrastructure market. These hyperscalers maintain GPU clusters with NVIDIA A100 and H100 accelerators distributed across global data centers. They handle the networking fabric, power systems, and cooling requirements that GPU workloads demand.

Key Infrastructure Components:

- GPU instances (A100, H100, V100)

- High-bandwidth networking for distributed training

- Object storage for training datasets

- Container orchestration for model deployment

AWS offers the broadest selection of instance types and the most mature tooling ecosystem. Microsoft Azure provides tight integration with enterprise systems and competitive GPU availability. Both platforms let developers access specialized hardware without capital expenditure.

Spot instances change the economics of AI training. These unused compute resources sell at discounts of 60-90% compared to on-demand pricing. Organizations that design fault-tolerant training pipelines can reduce infrastructure costs significantly. The tradeoff is potential interruption when cloud providers need capacity back.
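
A fault-tolerant pipeline in this sense usually means periodic checkpointing plus resume-on-restart. The sketch below simulates that with a toy loop; the function name, the JSON checkpoint format, and the simulated interruption are all illustrative assumptions rather than any cloud provider's API.

```python
import json
import os
import tempfile

def train_with_checkpoints(total_steps, ckpt_path, interrupt_at=None, every=10):
    """Run a toy training loop that resumes from the last checkpoint, if any."""
    step, loss = 0, 100.0
    if os.path.exists(ckpt_path):
        with open(ckpt_path) as f:
            saved = json.load(f)
        step, loss = saved["step"], saved["loss"]
    while step < total_steps:
        if interrupt_at is not None and step == interrupt_at:
            return step, False  # simulated spot reclamation
        loss *= 0.99            # stand-in for one optimizer step
        step += 1
        if step % every == 0:
            with open(ckpt_path, "w") as f:
                json.dump({"step": step, "loss": loss}, f)
    return step, True

ckpt = os.path.join(tempfile.mkdtemp(), "ckpt.json")
first = train_with_checkpoints(100, ckpt, interrupt_at=47)  # interrupted at step 47
second = train_with_checkpoints(100, ckpt)                  # resumes from step 40
print(first, second)
```

The work between the last checkpoint and the interruption (steps 40 to 47 here) is lost, so checkpoint frequency trades storage and write overhead against recomputation.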

Cloud platforms also solve the semiconductor supply chain problem. Individual companies struggle to secure GPU allocations during shortages. Hyperscalers negotiate directly with NVIDIA and other chip manufacturers, then redistribute capacity through their platforms. This gives smaller AI teams access to hardware they cannot purchase directly.


Storage Solutions for Intensive AI Tasks

AI workloads demand storage infrastructure that can handle petabyte-scale datasets while maintaining high throughput for training and inference operations. Traditional enterprise storage systems often fail to meet these requirements due to bandwidth limitations and access pattern constraints inherent to machine learning workflows.

Distributed file systems form the backbone of modern AI storage architectures. These systems enable parallel data access across compute clusters, allowing hundreds of GPUs to read training data simultaneously without creating bottlenecks. Facilities operated by hyperscalers typically deploy custom distributed storage layers optimized for sequential reads during model training phases.

Data lakes serve as centralized repositories for raw, unstructured datasets used in AI development. Organizations maintain these lakes in object storage systems that provide cost-effective scaling to multiple petabytes. The architecture separates compute from storage, enabling data science teams to process datasets without duplicating information across environments.

Key storage requirements for AI infrastructure:

- Throughput: 100+ GB/s read speeds for large-scale training

- Capacity: Petabyte-scale storage for dataset repositories

- Latency: Sub-millisecond access for inference workloads

- Scalability: Linear performance scaling as datasets grow
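
As a rough check on the throughput figure, aggregate read bandwidth can be estimated from cluster size and per-GPU consumption. The GPU count, sample rate, and sample size below are hypothetical values picked to show how a cluster lands near the 100 GB/s mark.

```python
def required_read_gb_per_s(num_gpus, samples_per_gpu_per_s, bytes_per_sample):
    """Aggregate read throughput in GB/s needed to keep every GPU fed."""
    return num_gpus * samples_per_gpu_per_s * bytes_per_sample / 1e9

# Hypothetical: 1,024 GPUs, each consuming 500 samples/s of 200 KB each.
need = required_read_gb_per_s(
    num_gpus=1024, samples_per_gpu_per_s=500, bytes_per_sample=200_000
)
print(f"{need:.1f} GB/s")
```

If storage delivers less than this, GPUs wait on input, which is why training clusters are often sized together with their storage tier.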

Data warehouses complement data lakes by providing structured access to processed datasets and model outputs. These systems enable analytics teams to evaluate model performance and track training metrics across experiments.

The storage layer must support both high-bandwidth sequential access during training and low-latency random access during inference. Organizations deploy tiered storage architectures that place frequently accessed data on NVMe arrays while archiving historical datasets to lower-cost object storage systems.

Energy Demands of Advanced AI Systems

AI data centers have become one of the fastest-growing electricity consumers globally. Training and running large language models and other AI systems takes massive amounts of power, straining existing grid infrastructure.

Key Power Consumption Stages

The energy demands of AI systems vary significantly across different operational phases:

- Model Training: Requires the highest power draw, often using thousands of GPUs running continuously for weeks or months

- Fine-tuning: Uses moderate energy as models adapt to specific tasks

- Inference: Consumes steady power for serving predictions and responses to user queries

Graphics processing units form the backbone of AI compute infrastructure. These specialized chips draw substantially more power than traditional CPUs. A single high-performance GPU can consume 400-700 watts under full load, and data centers deploy them in clusters of thousands.

Projected Growth in Electricity Demand

Industry analysts expect AI infrastructure to require 75-100 gigawatts of new generating capacity by the early 2030s. This translates to roughly 1,000 terawatt-hours of additional annual electricity consumption for digital infrastructure.
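
The conversion from capacity (gigawatts) to annual energy (terawatt-hours) is simple arithmetic, sketched here. The `load_factor` parameter is an assumption added for illustration; at continuous full load, 75-100 GW works out to about 657-876 TWh per year, which is the same order of magnitude as the figure quoted above.

```python
HOURS_PER_YEAR = 8760

def annual_twh(gigawatts, load_factor=1.0):
    """Energy in TWh per year for a given power draw in GW and average load factor."""
    return gigawatts * HOURS_PER_YEAR * load_factor / 1000

low, high = annual_twh(75), annual_twh(100)
print(f"{low:.0f}-{high:.0f} TWh per year at full load")
```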

The physical infrastructure supporting AI extends beyond compute chips. Cooling systems account for a substantial portion of data center energy use, as dense GPU clusters generate intense heat. Power distribution systems, networking equipment, and storage arrays add further to total consumption.

Data center operators face growing pressure to align energy demand with clean power generation. The temporal and spatial variability of AI workloads complicates integration with renewable energy sources. Some facilities now locate near abundant power supplies, whether from natural gas plants or hydroelectric dams.

Thermal Management and Cooling Innovations

AI workloads generate heat at levels that exceed the capacity of traditional air cooling systems. Modern GPU clusters can produce over 100 kW per rack, compared to 5-10 kW in conventional data centers.

Data centers currently consume approximately 415 TWh of electricity annually. This represents about 1.5% of global electricity demand. Projections suggest this figure could reach 945 TWh by 2030 as AI infrastructure scales.

Cooling Methods for High-Density Computing:

- Air Cooling - Traditional approach using HVAC systems; struggles above 30 kW per rack

- Direct-to-Chip Liquid Cooling - Delivers coolant directly to heat-generating components

- Immersion Cooling - Submerges servers in dielectric fluid

- Hybrid Systems - Combines air and liquid cooling for different rack densities
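
A quick estimate shows how GPU racks cross the air-cooling threshold listed above. The server counts and the 1.3x overhead for CPUs, memory, fans, and network cards are assumptions; the 30 kW limit and the 400-700 W per-GPU range come from this guide.

```python
AIR_COOLING_LIMIT_KW = 30  # air cooling struggles above this rack density

def rack_power_kw(servers_per_rack, gpus_per_server, watts_per_gpu, overhead=1.3):
    """Estimate rack draw in kW; `overhead` is an assumed non-GPU multiplier."""
    return servers_per_rack * gpus_per_server * watts_per_gpu * overhead / 1000

def needs_liquid_cooling(rack_kw):
    return rack_kw > AIR_COOLING_LIMIT_KW

dense = rack_power_kw(servers_per_rack=8, gpus_per_server=8, watts_per_gpu=700)
sparse = rack_power_kw(servers_per_rack=2, gpus_per_server=8, watts_per_gpu=400)
for label, kw in (("dense", dense), ("sparse", sparse)):
    print(f"{label}: {kw:.2f} kW, liquid cooling needed: {needs_liquid_cooling(kw)}")
```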

Liquid cooling solutions have become necessary rather than optional for AI infrastructure operators. These systems can handle thermal loads that would be impossible to manage with air alone. They also reduce the energy overhead associated with cooling, which can account for up to half of total data center power consumption.
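
Cooling overhead is commonly summarized as power usage effectiveness (PUE), the ratio of total facility power to IT power. In the sketch below, the first case models cooling at half of total consumption, as described above; the second case's 150 kW cooling load is a hypothetical figure for a liquid-cooled hall.

```python
def pue(it_kw, cooling_kw, other_kw=0.0):
    """Power usage effectiveness: total facility power divided by IT power."""
    return (it_kw + cooling_kw + other_kw) / it_kw

air_cooled = pue(it_kw=1000, cooling_kw=1000)    # cooling is half of total draw
liquid_cooled = pue(it_kw=1000, cooling_kw=150)  # hypothetical liquid-cooled case
print(air_cooled, liquid_cooled)
```

A PUE of 2.0 means every watt of compute costs a second watt of overhead, so lowering it frees grid capacity for more accelerators.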

The shift to liquid cooling requires substantial capital investment. Legacy facilities often need significant structural modifications to accommodate new thermal management systems. Cold plates, distribution units, and plumbing infrastructure must be installed throughout the facility.

Thermal management efficiency directly impacts data center economics. Better cooling allows higher compute density per square foot. It also reduces the total cost of ownership by lowering energy consumption and extending hardware lifespan.

Global Expansion of Hyperscale Data Centers

Hyperscale data center capacity is expanding at an unprecedented rate globally. Total capacity is expected to nearly double from 103 GW to 200 GW by 2030, driven primarily by AI workload requirements and cloud infrastructure growth.

Active hyperscaler IT load worldwide will rise from 24.37 GW in 2025 to 147.13 GW by 2035. This represents a more than sixfold increase in just one decade. The expansion reflects a fundamental shift in infrastructure priorities, where power density and cooling capacity are scaling faster than physical site counts.
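
The implied annual growth rate can be checked with a compound annual growth rate (CAGR) calculation. The helper below is a generic formula, not taken from the forecast itself.

```python
def cagr(start, end, years):
    """Compound annual growth rate between two values over `years` years."""
    return (end / start) ** (1 / years) - 1

multiple = 147.13 / 24.37
growth = cagr(24.37, 147.13, 10)
print(f"{multiple:.2f}x overall, {growth:.1%} per year")
```

Growing roughly sixfold in ten years corresponds to a little under 20% compounded annually.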

Key Growth Drivers:

- AI training and inference workloads

- Cloud service expansion

- Geographic distribution requirements

- Enterprise digital transformation

Hyperscalers are expected to control approximately 70 percent of forecast capacity in major markets through owned or leased facilities. Their infrastructure decisions are shaping the broader data center ecosystem and supply chain dynamics.

The buildout is entering a new phase characterized by disciplined, power-aware growth rather than raw expansion. Construction spending in the United States alone has tripled over the last three years. AI workloads are projected to represent half of all data center capacity by 2030.

Total industry investment is approaching $3 trillion, a scale some analysts describe as a supercycle. This capital deployment encompasses not just facility construction but also power infrastructure, cooling systems, and networking equipment necessary to support next-generation compute requirements.

The expansion faces significant constraints around energy availability and grid capacity. Hyperscalers are increasingly focusing on strategic locations with access to reliable power sources and favorable regulatory environments.

Semiconductor Supply Chain and Chip Manufacturing

The semiconductor supply chain represents one of the most complex industrial networks in modern manufacturing. A single advanced chip requires hundreds of steps across multiple countries, involving specialized equipment manufacturers, material suppliers, fabrication facilities, and assembly operations.

TSMC dominates advanced node production, controlling roughly 70% of cutting-edge chip manufacturing. This concentration creates significant vulnerability as demand for AI chips continues to surge. The company operates massive fabrication facilities that cost upwards of $20 billion each and require years to build.

The supply chain faces three primary constraints:

- Material shortages in specialized chemicals and rare earth elements

- Equipment bottlenecks from limited suppliers of extreme ultraviolet lithography tools

- Geopolitical tensions affecting trade routes and export controls

Upstream suppliers provide critical components that determine production capacity. Companies like ASML manufacture lithography equipment that costs over $150 million per unit, with lead times extending beyond 18 months. These tools pattern circuits on silicon wafers with features measured in nanometers.

The downstream assembly process adds further complexity. Advanced packaging techniques for chiplets and high-bandwidth memory require specialized facilities separate from fabrication plants. Each step must maintain near-perfect precision, as a single defect can render an entire wafer worthless.

Vertical integration has emerged as one response to supply chain fragility. Some firms now control design, manufacturing, and assembly under unified operations to reduce dependencies. Others pursue strategic partnerships across the supply network to secure capacity and materials access.

Impacts on National Security

AI infrastructure has become a critical component of national defense strategy. The United States and other nations are investing billions of dollars in secure data centers, specialized compute clusters, and hardened networking systems to maintain technological superiority.

Key Infrastructure Vulnerabilities

Modern AI systems depend on massive GPU clusters and data centers that present attractive targets for adversaries. These facilities require robust physical security, network segmentation, and real-time threat monitoring. State-sponsored actors increasingly target semiconductor supply chains, cloud infrastructure providers, and research networks to steal AI models or disrupt training operations.

Security Requirements for Defense Applications

Military and intelligence agencies implementing AI capabilities must address several technical challenges:

- Encryption protocols for data at rest and in transit across distributed training clusters

- Access controls that segment classified workloads from commercial cloud infrastructure

- Data privacy frameworks that protect sensitive operational information during model development

- Air-gapped systems for the most sensitive national security applications

The Department of Defense faces particular challenges integrating AI into operational technology environments where safety and reliability requirements exceed commercial standards.

Geopolitical Infrastructure Competition

Nations recognize that domestic compute capacity determines AI leadership. China's investments in indigenous GPU development and data center construction aim to reduce dependence on Western semiconductor suppliers. Export controls on advanced chips like the H100 and future generations create strategic bottlenecks that reshape global AI infrastructure deployment.

The concentration of hyperscale computing resources among a handful of cloud providers creates single points of failure. Government agencies must balance the efficiency of commercial infrastructure against sovereignty concerns and supply chain risks.

Geopolitical Challenges and Sovereign Technologies

AI infrastructure has become a strategic concern for nations worldwide. Countries now view compute resources, data centers, and semiconductor supply chains as critical to national security and economic power.

The fragmentation of global AI infrastructure reflects deepening geopolitical tensions. The United States, China, and the European Union pursue distinct approaches to data governance and technology development. This has led to what analysts call "technological decoupling."

Key infrastructure concerns include:

- Compute access: Control over GPU manufacturing and distribution

- Data residency: Where training data and models are stored and processed

- Energy requirements: Substantial power needs for AI development and deployment

- Supply chain dependencies: Semiconductor fabrication and advanced chip design

Sovereign AI initiatives require nations to maintain infrastructure within their borders. This includes AI accelerators, data centers, and the frameworks needed to develop large language models. Many governments now mandate that certain AI workloads remain under domestic control.

Major cloud providers have responded to these demands. AWS launched the European Sovereign Cloud to address regional data governance requirements. This infrastructure splits the global cloud computing layer into geographically distinct systems.

The trade-offs are significant. Building domestic AI infrastructure requires massive capital investment in facilities, energy systems, and specialized hardware. Nations must balance technological independence against the efficiency gains from shared global infrastructure.

Regulatory frameworks continue to evolve across jurisdictions. Each region establishes different standards for AI development, deployment, and oversight based on local legal and cultural contexts.

Contrasting AI Training with Inference Processes

Model training and inference represent distinct phases of the machine learning lifecycle, each demanding different infrastructure approaches. Training builds a model by adjusting billions of parameters against large datasets. This process runs once per model version and requires massive parallel compute capacity.

Inference applies the trained model to new inputs in production environments. Every chatbot query or automated decision constitutes an inference event. Unlike training's one-time compute spike, inference runs continuously across thousands of simultaneous requests.

Key Operational Differences:


| Aspect | Training | Inference |
| --- | --- | --- |
| Frequency | Once per model version | Continuous, millions of queries |
| Compute Pattern | Batch processing, weeks to months | Real-time, milliseconds per request |
| Hardware Priority | Maximum throughput | Low latency, energy efficiency |
| Cost Structure | Large upfront capital | Ongoing operational expense |

Training GPT-4 is estimated to have consumed approximately $78 million in compute resources. A single training run for a frontier model can draw tens of megawatts over extended periods. Data center clusters for training prioritize high-bandwidth GPU interconnects and massive memory capacity.

AI inference is projected to account for 65% of AI compute demand by 2029 and is estimated to represent 80-90% of lifetime AI costs. Inference workloads also favor different silicon: they often run on chips optimized for lower-precision arithmetic, throughput, and energy efficiency, whereas training needs higher numerical precision.
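
A simple model shows how inference can come to dominate lifetime cost. Only the $78 million training figure comes from this guide; the per-query cost, query volume, and two-year service life are hypothetical inputs chosen for illustration.

```python
def inference_share(training_cost, cost_per_1k_queries, queries_per_day, days):
    """Fraction of lifetime compute cost spent on inference."""
    inference_cost = cost_per_1k_queries * queries_per_day / 1000 * days
    return inference_cost / (training_cost + inference_cost)

# Hypothetical service: 300 million queries a day for two years at $2 per 1,000.
share = inference_share(
    training_cost=78e6, cost_per_1k_queries=2.0, queries_per_day=300e6, days=730
)
print(f"Inference share of lifetime cost: {share:.0%}")
```

Training is paid once, while inference cost scales with usage and time, so a popular model's serving bill eventually outgrows its training bill.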

The two phases stress hardware differently and require separate optimization strategies. Training happens in centralized facilities with dense GPU clusters. Inference increasingly distributes across edge locations to reduce latency and bandwidth costs.

Emerging Trends in AI Infrastructure

AI infrastructure is shifting from centralized cloud facilities to distributed compute architectures. Edge computing has gained traction as organizations push inference workloads closer to data sources, reducing latency for real-time applications. This trend reflects growing demand for processing power at the network periphery rather than exclusively in hyperscale data centers.

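Retrieval-Augmented Generation (RAG) architectures are reshaping how organizations deploy large language models. A RAG system retrieves relevant documents from an external knowledge base at query time and passes them to the model as context. This lets organizations add or update knowledge without retraining, and it can allow smaller models to handle tasks that would otherwise call for a larger one, offering a more efficient alternative to training massive foundation models from scratch.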
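
The retrieval step can be sketched with nothing more than word counts and cosine similarity. This is a deliberately simplified stand-in: real systems typically use learned embeddings and a vector database, and the documents, function names, and prompt template here are invented for illustration.

```python
import math
from collections import Counter

DOCS = [
    "Liquid cooling handles racks above 100 kW.",
    "InfiniBand uses RDMA for low latency networking.",
    "Spot instances are discounted but can be interrupted.",
]

def embed(text):
    """Bag-of-words vector; a real system would use a learned embedding model."""
    return Counter(text.lower().replace(".", "").replace("?", "").split())

def cosine(a, b):
    dot = sum(a[t] * b[t] for t in a)  # Counter returns 0 for missing terms
    norm = math.sqrt(sum(x * x for x in a.values())) * math.sqrt(
        sum(x * x for x in b.values())
    )
    return dot / norm if norm else 0.0

def retrieve(question, docs, k=1):
    """Return the k documents most similar to the question."""
    q_vec = embed(question)
    return sorted(docs, key=lambda d: cosine(q_vec, embed(d)), reverse=True)[:k]

def build_prompt(question, docs):
    """Assemble the text that would be sent to a language model."""
    context = "\n".join(retrieve(question, docs))
    return f"Context:\n{context}\n\nQuestion: {question}\nAnswer:"

prompt = build_prompt("Which networking technology uses RDMA?", DOCS)
print(prompt)
```

The model call itself is omitted; the point is that the knowledge lives in `DOCS`, which can be updated without touching model weights.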

Specialized inference engines like vLLM are optimizing model serving infrastructure. These tools improve GPU utilization and throughput for production deployments, addressing the economic challenges of running large models at scale. Hyperscalers and enterprises are adopting these frameworks to lower operational costs while maintaining performance.

Key Infrastructure Developments:

- GPU supply constraints driving investments in custom silicon and alternative accelerators

- Data center power requirements reaching 50-100+ megawatts per facility

- High-bandwidth networking becoming critical for distributed training clusters

- Liquid cooling systems replacing air cooling for dense GPU deployments

Agentic AI systems are creating new infrastructure demands. These autonomous frameworks require persistent compute resources, real-time data pipelines, and orchestration layers that differ from traditional inference workloads. The architecture must support continuous decision-making loops rather than single-request responses.

Geopolitical factors are influencing infrastructure location decisions. Export controls on advanced semiconductors and data sovereignty requirements are pushing organizations to build regional compute capacity. This fragmentation complicates global AI deployment strategies for multinational enterprises.

Conclusion

AI infrastructure represents a capital-intensive technical stack that spans silicon design, power distribution, networking architecture, and software orchestration layers. Organizations deploying these systems face trade-offs between on-premises GPU clusters and cloud-based solutions from hyperscalers like AWS, Google Cloud, and Microsoft Azure.

The hardware requirements differ substantially between training and inference workloads. Training demands high-bandwidth interconnects such as InfiniBand or RDMA-capable Ethernet, while inference prioritizes latency reduction and throughput optimization. Data centers supporting these workloads require specialized cooling systems and power infrastructure that can handle densities exceeding traditional enterprise IT environments.

Key infrastructure components include:

- Compute: GPUs, TPUs, and specialized AI accelerators

- Networking: High-speed interconnects for distributed training

- Storage: Low-latency systems for large dataset access

- Power: Grid capacity and backup systems for continuous operation

- Software: MLOps platforms, model serving frameworks, and monitoring tools

Geopolitical factors increasingly shape infrastructure decisions. Export controls on advanced semiconductors and regional data sovereignty requirements influence where organizations build capacity and source hardware.

The economic model for AI infrastructure continues to evolve. Companies must evaluate build-versus-buy decisions based on workload characteristics, scale requirements, and total cost of ownership across multi-year periods. This calculation includes not just hardware acquisition but ongoing power costs, which can represent 40-50% of operational expenses for large-scale training clusters.
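The power portion of that calculation is simple arithmetic. The sketch below is a rough estimate under stated assumptions: it counts only GPU power, scales it by a power usage effectiveness (PUE) factor to cover cooling and facility overhead, and uses placeholder figures for cluster size and electricity price. The function names are hypothetical.

```python
HOURS_PER_YEAR = 8760

def annual_power_cost_usd(gpu_count, watts_per_gpu, pue, usd_per_kwh, utilization=1.0):
    """Estimate yearly electricity cost for the GPUs in a cluster.

    pue is total facility power divided by IT equipment power.
    utilization is the fraction of the year the GPUs run at full power.
    """
    it_load_kw = gpu_count * watts_per_gpu / 1000
    facility_kw = it_load_kw * pue
    return facility_kw * HOURS_PER_YEAR * utilization * usd_per_kwh

def power_share_of_opex(power_cost_usd, other_opex_usd):
    """Fraction of operating expenses that goes to electricity."""
    return power_cost_usd / (power_cost_usd + other_opex_usd)

# Placeholder inputs: 1,000 GPUs at 700 W, PUE of 1.3, $0.08 per kWh.
cost = annual_power_cost_usd(1000, 700, pue=1.3, usd_per_kwh=0.08)
print(f"Estimated annual GPU power cost: ${cost:,.0f}")
```

Real totals run higher because CPUs, networking, and storage also draw power.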

Frequently Asked Questions

AI infrastructure requires specialized hardware configurations, networking topologies, and operational frameworks that differ substantially from traditional enterprise IT systems. Organizations building AI capabilities need to understand GPU cluster orchestration, model serving architectures, and the cost structures of compute-intensive workloads.

What are the core components of an AI infrastructure stack?

The hardware layer forms the foundation with GPUs or specialized accelerators like TPUs for training and inference workloads. NVIDIA A100 and H100 chips dominate training clusters, while inference often runs on lower-cost alternatives like T4 or A10 GPUs depending on latency requirements.

Storage systems must handle both high-throughput data ingestion and fast retrieval for model training. Object storage serves training datasets, while high-speed NVMe drives cache frequently accessed data during training runs.

Networking infrastructure connects GPU nodes through high-bandwidth fabrics like InfiniBand or RoCE. These networks achieve 400 Gbps to 3.2 Tbps per node to prevent communication bottlenecks during distributed training.

The software stack includes orchestration platforms like Kubernetes, MLOps tools for model management, and frameworks such as PyTorch or TensorFlow. Monitoring systems track GPU utilization, memory usage, and training metrics across clusters.

How does AI infrastructure differ from traditional IT infrastructure?

Traditional IT infrastructure prioritizes reliability and consistent performance for transactional workloads. Database servers, web applications, and enterprise software run on general-purpose CPUs with predictable resource consumption patterns.

AI infrastructure demands specialized processors optimized for parallel matrix operations. A single high-end GPU can draw up to 700 watts, and a training node typically holds several of them, compared to 200-300 watts for an entire typical server. This requires different power and cooling architectures.

The economics differ substantially. Traditional infrastructure follows steady-state capacity planning, while AI training creates burst workloads that consume thousands of GPUs for days or weeks. Organizations must choose between owning expensive GPU clusters that may sit underutilized between training runs and paying premium prices for cloud GPU instances.

Network requirements escalate dramatically for distributed training. Traditional systems operate on 10-25 Gbps Ethernet, while AI clusters need 200-800 Gbps low-latency networks to synchronize gradient updates across hundreds of GPUs.
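A back-of-the-envelope estimate shows why link speed matters for gradient synchronization. The sketch below assumes a ring all-reduce, one common synchronization scheme (the article does not name a specific one), and ignores latency and overlap with computation. The function name and the example model size are hypothetical.

```python
def ring_allreduce_seconds(gradient_bytes, num_workers, link_gbps):
    """Bandwidth-only lower bound for one ring all-reduce.

    In a ring all-reduce each worker sends and receives roughly
    2 * (N - 1) / N times the gradient size.
    """
    if num_workers < 2:
        return 0.0  # nothing to synchronize
    bytes_per_second = link_gbps * 1e9 / 8
    traffic_bytes = 2 * (num_workers - 1) / num_workers * gradient_bytes
    return traffic_bytes / bytes_per_second

# Assumed example: 7 billion parameters with 2-byte gradients, 256 workers.
gradient_bytes = 7e9 * 2
for gbps in (25, 400):
    seconds = ring_allreduce_seconds(gradient_bytes, 256, gbps)
    print(f"{gbps} Gbps link: about {seconds:.2f} s per synchronization")
```

Because the step repeats on every training iteration, a slower link multiplies directly into total training time.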

What does a typical AI infrastructure architecture look like for production workloads?

Production architectures separate training and inference infrastructure based on different performance and cost profiles. Training clusters use high-end GPUs in tightly coupled configurations, while inference deployments optimize for throughput and cost per request.

The training environment consists of GPU pods with 8-16 accelerators per node connected through high-speed fabric. These pods connect to distributed file systems or object storage containing petabytes of training data. Organizations run multiple training jobs simultaneously using container orchestration and job schedulers.

Inference architecture scales horizontally with load balancers distributing requests across GPU or CPU instances. Smaller models run on CPU instances for cost efficiency, while large language models require GPU serving with techniques like batching and quantization to improve throughput.
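Batching can be illustrated with a small simulation. The sketch below groups requests by arrival time, closing a batch when it is full or when a new request arrives after the oldest one has waited too long. The function name, the millisecond timestamps, and the limits are assumptions for illustration; production servers implement this inside the serving framework.

```python
def dynamic_batches(arrival_times_ms, max_batch_size, max_wait_ms):
    """Group request arrival times into batches.

    A batch is closed when it reaches max_batch_size, or when the next
    request arrives more than max_wait_ms after the batch's first request.
    """
    batches = []
    current = []
    for t in sorted(arrival_times_ms):
        if current and t - current[0] > max_wait_ms:
            batches.append(current)
            current = []
        current.append(t)
        if len(current) == max_batch_size:
            batches.append(current)
            current = []
    if current:
        batches.append(current)
    return batches

# A burst of four requests fills one batch; two stragglers form another.
print(dynamic_batches([0, 1, 2, 3, 50, 51], max_batch_size=4, max_wait_ms=10))
```

A larger batch size raises GPU throughput, while a shorter wait limit protects latency for requests that arrive during quiet periods.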

Data platforms feed both systems. Feature stores serve preprocessed data to models, while data pipelines continuously process raw data for retraining. Model registries version and track deployed models across environments.

Which tools and software are most commonly used to build and operate AI infrastructure?

Kubernetes has become the standard orchestration layer with specialized operators like Kubeflow for ML workflows. Ray provides distributed computing capabilities for training and hyperparameter tuning across clusters.

MLflow and Weights & Biases track experiments, log metrics, and manage model versions. These platforms integrate with training code to capture hyperparameters, performance metrics, and model artifacts automatically.

Model serving platforms include NVIDIA Triton for GPU inference, TorchServe for PyTorch models, and TensorFlow Serving. Cloud providers offer managed services like Amazon SageMaker, Google Vertex AI, and Azure Machine Learning that bundle infrastructure and tooling.

Data processing relies on Apache Spark for batch workloads and tools like Apache Kafka or Kinesis for streaming data. Feature stores like Tecton or Feast manage feature engineering pipelines and ensure consistency between training and inference.

Infrastructure as code tools such as Terraform provision GPU instances and configure networking. Monitoring systems like Prometheus collect metrics, while platforms like Grafana visualize GPU utilization, training progress, and infrastructure health.

How do you design AI infrastructure for scalability, reliability, and cost efficiency?

Scalability starts with cluster sizing based on model architecture and training data volume. Organizations calculate GPU memory requirements, determine nodes needed for distributed training, and design network topology to minimize communication overhead.
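A first-pass sizing estimate can be sketched as follows. It assumes mixed-precision training with the Adam optimizer, a common rule of thumb of about 16 bytes of state per parameter, and it ignores activation memory, which can be substantial. The function names and the 80% usable-memory figure are hypothetical.

```python
import math

# Rule of thumb for mixed-precision Adam: 2 bytes (16-bit weights)
# + 2 bytes (16-bit gradients) + 12 bytes (32-bit master weights,
# momentum, and variance) per parameter.
BYTES_PER_PARAM_MIXED_ADAM = 16

def training_state_gb(num_params, bytes_per_param=BYTES_PER_PARAM_MIXED_ADAM):
    """Memory for weights, gradients, and optimizer state, in GB (1e9 bytes)."""
    return num_params * bytes_per_param / 1e9

def min_gpus_for_state(num_params, gpu_memory_gb, usable_fraction=0.8):
    """Smallest GPU count whose combined usable memory holds the training state.

    Assumes the state can be sharded evenly across GPUs.
    """
    usable_gb = gpu_memory_gb * usable_fraction
    return math.ceil(training_state_gb(num_params) / usable_gb)

for params in (7e9, 70e9):
    print(f"{params / 1e9:.0f}B parameters: {training_state_gb(params):.0f} GB of state, "
          f"at least {min_gpus_for_state(params, 80)} GPUs with 80 GB each")
```

The result is a floor, not a recommendation: activations, batch size, and throughput targets usually push the real node count higher.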

Autoscaling policies adjust inference capacity based on request volume. Systems provision additional GPU instances when latency thresholds are breached and scale down during low-traffic periods. Batch inference processes large volumes asynchronously using spot instances at 60-80% discounts.
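A latency-driven scaling rule can be sketched as a single function. The proportional formula below is modeled loosely on the one used by the Kubernetes Horizontal Pod Autoscaler; treating latency as proportional to load is a simplification, and the function name, limits, and tolerance are assumptions.

```python
import math

def desired_replicas(current, observed_p95_ms, target_p95_ms,
                     min_replicas=1, max_replicas=64, tolerance=0.1):
    """Propose a replica count from observed versus target latency.

    Within the tolerance band nothing changes, which avoids flapping.
    The result is clamped to the configured minimum and maximum.
    """
    ratio = observed_p95_ms / target_p95_ms
    if abs(ratio - 1.0) <= tolerance:
        return current
    proposed = math.ceil(current * ratio)
    return max(min_replicas, min(max_replicas, proposed))

print(desired_replicas(4, observed_p95_ms=750, target_p95_ms=500))  # scale up
print(desired_replicas(4, observed_p95_ms=100, target_p95_ms=500, min_replicas=2))  # scale down
```

In practice the minimum replica count also has to cover GPU cold-start time, since loading a large model onto a new instance can take minutes.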

Reliability requires fault tolerance in long-running training jobs. Checkpointing saves model state every few hours so that a failure loses only the progress made since the last checkpoint. Multi-region deployments protect inference services, with traffic routing to healthy regions during outages.
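The checkpoint-and-resume pattern can be sketched with the standard library alone. This is an illustration, not a training framework: the "model state" is a small dictionary, checkpoints are taken every few steps rather than every few hours, and `save_checkpoint`, `load_checkpoint`, and `train` are hypothetical names.

```python
import json
import os
import tempfile

def save_checkpoint(path, state):
    """Write to a temporary file, then rename it into place.

    The rename is atomic, so a crash mid-write cannot corrupt
    the last good checkpoint.
    """
    tmp_path = path + ".tmp"
    with open(tmp_path, "w") as f:
        json.dump(state, f)
    os.replace(tmp_path, path)

def load_checkpoint(path):
    """Return the saved state, or a fresh state if none exists."""
    if os.path.exists(path):
        with open(path) as f:
            return json.load(f)
    return {"step": 0, "loss": None}

def train(path, total_steps, checkpoint_every, fail_at=None):
    """Run (or resume) training; fail_at simulates a node failure."""
    state = load_checkpoint(path)
    for step in range(state["step"] + 1, total_steps + 1):
        if step == fail_at:
            raise RuntimeError(f"simulated node failure at step {step}")
        state = {"step": step, "loss": 1.0 / step}  # placeholder for real work
        if step % checkpoint_every == 0:
            save_checkpoint(path, state)
    return state

checkpoint_path = os.path.join(tempfile.mkdtemp(), "ckpt.json")
try:
    train(checkpoint_path, total_steps=10, checkpoint_every=5, fail_at=7)
except RuntimeError as err:
    print(err)
print(train(checkpoint_path, total_steps=10, checkpoint_every=5))  # resumes after step 5
```

The checkpoint interval is a trade-off: frequent saves cost storage bandwidth, while infrequent saves increase the work lost per failure.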

Cost optimization balances performance with economics. Training uses spot instances when possible, with automatic job migration during interruptions. Model compression techniques like quantization and pruning reduce inference costs by enabling smaller, faster models.
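Quantization can be shown on a handful of numbers. The sketch below uses symmetric 8-bit quantization with a single scale per tensor, one simple variant among several; production toolchains use more elaborate schemes. The function names are hypothetical.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate floats from the integer values."""
    return [q * scale for q in quantized]

weights = [0.5, -1.0, 0.25, 0.0]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
worst_error = max(abs(a - b) for a, b in zip(weights, restored))
print(quantized, f"worst-case error {worst_error:.5f}")
```

Storing one byte per weight instead of four for 32-bit floats cuts memory roughly fourfold, at the cost of a rounding error of at most half the scale per weight.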

Reserved instances or savings plans reduce costs for steady-state workloads by 30-50%. Organizations benchmark different instance types to find optimal price-performance ratios for specific models.
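Whether a commitment pays off depends on how many hours the instance is actually used. The sketch below assumes a simplified pricing model in which a reserved instance is billed for every hour at a discounted rate and an on-demand instance is billed only while in use. The function names and prices are hypothetical.

```python
def break_even_utilization(discount):
    """Fraction of hours an instance must be used for a commitment to pay off."""
    return 1.0 - discount

def cheaper_option(on_demand_hourly_usd, discount, expected_utilization):
    """Compare average hourly cost of a commitment against on-demand use."""
    committed = on_demand_hourly_usd * (1 - discount)        # billed every hour
    on_demand = on_demand_hourly_usd * expected_utilization  # billed only when used
    return "reserved" if committed < on_demand else "on-demand"

# Assumed example: a 40% discount on a $10 per hour instance.
print(break_even_utilization(0.40))
print(cheaper_option(10.0, 0.40, expected_utilization=0.9))
print(cheaper_option(10.0, 0.40, expected_utilization=0.5))
```

Under this model, a 30-50% discount only pays off when the instance is busy for more than 50-70% of the hours in the commitment period.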

What are practical examples of AI infrastructure setups for common use cases?

Computer vision model training for autonomous vehicles can require clusters of 256-1024 H100 GPUs connected through 400 Gbps InfiniBand. These systems process petabytes of sensor data stored in distributed file systems, with training runs lasting days to weeks.

Large language model inference for chatbots deploys across multiple availability zones with GPU instances serving requests. Load balancers distribute traffic, while caching layers store common responses. Organizations use 8-16 A100 or L40 GPUs per serving pod with batch processing to maximize throughput.
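The caching layer in such a deployment can be sketched as a small least-recently-used cache in front of the model. This is a minimal illustration: the `ResponseCache` class is hypothetical, the key normalization is deliberately naive, and `fake_model` stands in for a real GPU-backed model call.

```python
from collections import OrderedDict

class ResponseCache:
    """Least-recently-used cache keyed on a normalized prompt."""

    def __init__(self, capacity):
        self.capacity = capacity
        self._entries = OrderedDict()
        self.hits = 0
        self.misses = 0

    def get_or_generate(self, prompt, generate):
        # Normalize case and whitespace so trivial variants share an entry.
        key = " ".join(prompt.lower().split())
        if key in self._entries:
            self._entries.move_to_end(key)  # mark as recently used
            self.hits += 1
            return self._entries[key]
        self.misses += 1
        response = generate(prompt)  # the expensive model call
        self._entries[key] = response
        if len(self._entries) > self.capacity:
            self._entries.popitem(last=False)  # evict the least recently used
        return response

def fake_model(prompt):
    """Stand-in for an expensive model call."""
    return f"answer to: {prompt}"

cache = ResponseCache(capacity=1000)
cache.get_or_generate("What is a GPU?", fake_model)
cache.get_or_generate("what is a  gpu?", fake_model)  # served from cache
print(cache.hits, cache.misses)
```

Every cache hit is a request that never reaches a GPU, so even a modest hit rate lowers the number of serving instances needed.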

Recommendation systems at scale combine real-time feature computation with model inference. Stream processing platforms like Flink generate features from user interactions, feeding them to model servers that return predictions in milliseconds. These systems run on hundreds of CPU instances with selective GPU use for deep learning components.

Financial services fraud detection processes transaction streams through gradient boosted trees on CPU clusters. Models retrain nightly on new data using distributed frameworks, with updated versions deploying automatically through CI/CD pipelines.

Healthcare imaging analysis uses GPU workstations for radiologists with models running locally. Centralized training occurs on cloud GPU clusters, while inference deploys to edge devices in medical facilities with strict data residency requirements.
