Google unveils two purpose-built eighth-generation TPUs to power the Agentic AI era

NewsFriday, 15 May 2026 at 16:05

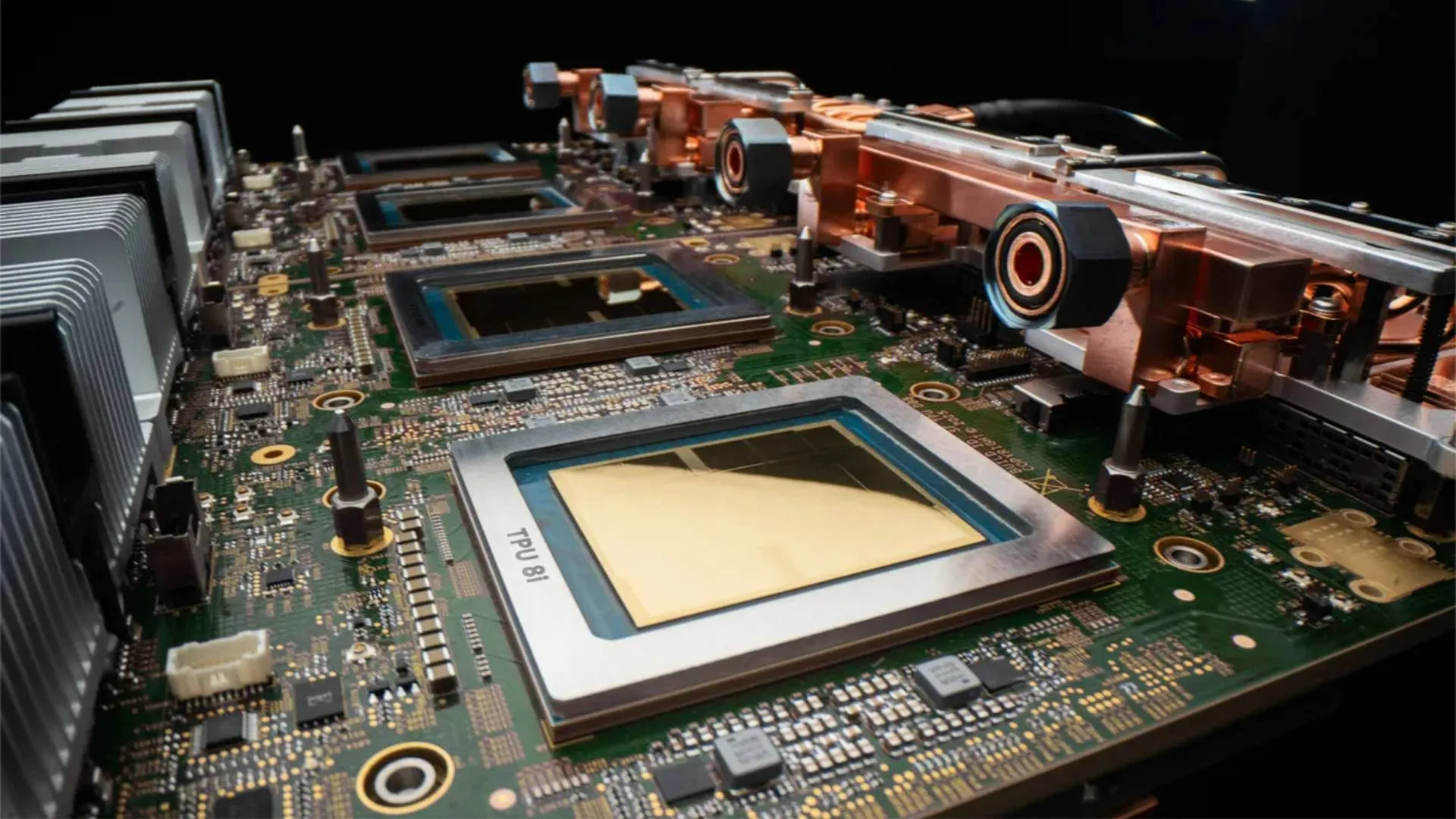

Google has announced its eighth generation of custom Tensor Processing Units (TPUs), introducing two distinct chips - TPU 8t and TPU 8i - engineered specifically for the demands of AI agents. Unveiled at Google Cloud Next, the chips represent the culmination of more than a decade of silicon development and mark a significant shift in how Google is approaching AI infrastructure: not with a single general-purpose chip, but with two purpose-built architectures, one for training and one for inference.

Built for Agentic AI

The growing popularity of AI agents has altered the way AI infrastructure was defined and has fundamentally changed what AI infrastructure must deliver. In this age of AI agents, models must reason through problems, execute multi-step workflows, and learn from their own actions in continuous loops, placing an entirely new set of demands on underlying hardware.

This is a huge departure from earlier AI workloads that were largely static and batch-driven. Agentic AI systems we use now are iterative, collaborative and latency-sensitive. To meet the needs of today's AI infrastructure, Google designed the TPU 8t and TPU 8i in partnership with Google DeepMind. Google says the decision to design two separate chips instead of a single unified architecture came from its decade-long experience of building chips for AI tasks.

"Several years ago, we anticipated rising demand for inference from customers as frontier AI models are deployed in production and at scale," said Amin Vahdat, SVP and Chief Technologist, AI and Infrastructure at Google. "And with the rise of AI agents, we determined the community would benefit from chips individually specialized to the needs of training and serving."

Read also

TPU 8t: Built for the training cycle

The TPU 8t is Google's answer to the growing complexity of frontier model development. It is built to reduce the frontier model development cycle from months to weeks, delivering nearly 3x the compute performance per pod over the previous generation.

A single TPU 8t superpod, Google says, now scales to 9,600 chips and two petabytes of shared high bandwidth memory, with double the interchip bandwidth of the previous generation. This chip delivers 121 ExaFlops of compute and allows the most complex models to leverage a single, massive pool of memory.

Performance alone isn’t the focus because reliability gets its own share of attention too. Google argues that TPU 8t is engineered to target over 97% "goodput," which is a measure of useful, productive compute time, through capabilities including real-time telemetry across tens of thousands of chips, automatic detection and rerouting around faulty links without interrupting a job, and Optical Circuit Switching that reconfigures hardware around failures with no human intervention.

TPU 8i: Designed for low-latency agent interactions

Where TPU 8t powers the building of models, TPU 8i is built for running them. It is designed specifically to handle the intricate, real-time demands of multi-agent workloads. To eliminate latency bottlenecks, Google made several architectural changes including pairing 288GB of high-bandwidth memory with 384MB of on-chip SRAM, which is three times higher than the previous generation.

For a modern mixture of expert models, Google doubled the interconnect bandwidth to 19.2Tb/s, and a new Boardfly architecture reduces the maximum network diameter by more than 50%, ensuring the system works as one cohesive, low-latency unit.

Google claims these design changes result in 80% better performance-per-dollar compared to the previous generation, enabling businesses to serve nearly twice the customer volume at the same cost.

Power efficiency becomes first-class citizen

For AI infrastructure, compute is no longer the biggest bottleneck but energy is. To power data centres, AI hyperscalers are turning to gas power and Google’s 8th gen TPUs address this by making efficiency a system-wide commitment. Google claims TPU 8t and TPU 8i deliver up to two times better performance-per-watt over the previous generation.

Both TPU 8t and TPU 8i are supported by Google's fourth-generation liquid cooling technology, and since Google’s data centers have been co-designed with TPUs, they deliver six times more computing power per unit of electricity than they did just five years ago.

Both chips will be generally available later this year and can be used as part of Google's AI Hypercomputer, which brings together purpose-built hardware, open software, and flexible consumption models into a unified stack. Both platforms support native JAX, PyTorch, SGLang and vLLM, and interested customers can request access ahead of general availability.

The demand for agentic AI has been unprecedented and with TPU 8t and TPU 8i, Google is showcasing that the agentic era demands more than incremental chip improvements, it demands infrastructure rethought from the ground up. The real question is whether these new tensor processing units can put a dent in NVIDIA’s stronghold.

Popular news

Trump brings tech titans to China talks with Xi

Nebius rockets on the stock market after explosive AI growth; Amsterdam rides the wave

Dutch journalists launch The Firewall: platform takes on Big Tech in court and in the press

Dutch investment fund on the hunt for the next ASML in tech startups

AI Infrastructure Explained: The Essential Guide to Hardware, Networks, and Systems Powering Modern Machine Learning

Latest comments

Loading