ChatGPT 'Solved' a 60-Year Erdős Problem? Here’s What Actually Happened

News · Tuesday, 28 April 2026 at 21:52

A viral claim that ChatGPT “solved” a decades-old Erdős problem is spreading quickly. The reality is more restrained. There is no confirmed mathematical breakthrough here, but the episode does highlight a meaningful shift in how AI-generated reasoning is perceived and used.

What actually happened

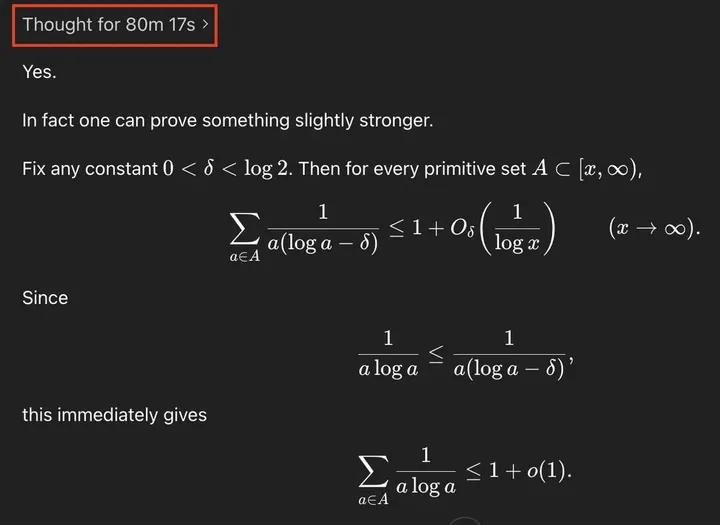

The claim originates from a shared ChatGPT conversation in which the model produces a mathematical argument related to an open problem listed on erdosproblems.com. The output looks structured, uses advanced notation, and appears to extend known results.

That has led some readers to conclude that the problem has been solved.

There is no evidence of that.

Problems of this type require:

- formal write-up in a paper

- verification by multiple experts

- sustained scrutiny in the mathematical community

None of those conditions have been met. Without them, no claim of a “solution” holds.

Where the claim breaks down

A closer look at the generated argument reveals why the conclusion is premature.

The model frames its result as:

- “one can prove something slightly stronger”

- followed by an asymptotic inequality involving big-O notation

This is typical of heuristic or partial arguments, not finalized proofs.

In particular:

- asymptotic bounds do not automatically resolve the full structure of a combinatorial problem

- the argument depends on inequalities that may not hold uniformly across all required cases

- key steps are asserted without full justification
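
For readers unfamiliar with the notation, the standard textbook definition of big-O helps show why such a bound can leave cases open. The functions f and g below are generic placeholders, not the quantities that appear in the ChatGPT argument:

```latex
f(n) = O\bigl(g(n)\bigr)
\iff
\exists\, C > 0,\ \exists\, n_0 \in \mathbb{N} \ \text{such that}\
|f(n)| \le C\, g(n) \quad \text{for all } n \ge n_0 .
```

The definition constrains f only beyond some threshold n_0, and only up to an unspecified constant C. A problem that asks about every n, or about an exact value, may still need separate arguments for whatever the bound does not reach.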

In advanced mathematics, these are not minor details. A single unjustified step leaves the entire result unproven until the gap is closed.

At best, what the model produced is:

- a plausible sketch

- a recombination of known techniques

- potentially a restatement of existing partial results

That is categorically different from solving an open problem.

Why the output looks convincing

The reaction is understandable.

Modern language models can:

- reproduce the structure of formal proofs

- apply known techniques in new combinations

- maintain long chains of symbolic reasoning

For non-specialists, that can be hard to distinguish from real mathematical progress.

But mathematical validity is not about style. It is about rigor under scrutiny. That gap between appearance and verification is where confusion arises.

What this signals about AI capability

The more important signal is not the incorrect claim, but the type of output that triggered it.

The model demonstrates:

- coherent multi-step reasoning

- familiarity with advanced mathematical tools

- the ability to extend patterns beyond direct memorization

This goes beyond simple text prediction in any practical sense. It shows that AI systems can now generate candidate reasoning paths in technical domains.

However, generation is not understanding, and it is not proof.

The real bottleneck: verification

This episode highlights a structural shift.

AI systems are becoming good at:

- producing plausible proofs

- suggesting strategies

- exploring solution space quickly

But verification remains:

- slow

- expert-dependent

- unforgiving

In mathematics and other high-stakes domains, the limiting factor is no longer idea generation. It is validation.
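
As a rough illustration of what "unforgiving" means in practice, here is a minimal sketch in Lean 4, one example of a proof assistant. The article does not name a specific verification tool, and both theorem names are invented for this example:

```lean
-- A fully justified statement: Lean checks it by unfolding definitions.
theorem n_add_zero (n : Nat) : n + 0 = n := rfl

-- A statement whose key step is asserted rather than proved.
-- Lean still processes the file, but it warns that the declaration
-- uses `sorry`, so the theorem does not count as verified.
theorem double_unjustified (n : Nat) : n * 2 = n + n := by
  sorry
```

The second statement happens to be true, yet the checker still flags it, because a gap in the proof is tracked no matter how plausible the claim looks. A prose argument has no equivalent safeguard, which is why it depends on expert review.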

Why this matters

For executives, researchers, and policymakers, the implication is practical. AI tools will increasingly produce outputs that:

- look correct

- sound authoritative

- require domain expertise to validate

This creates new risks:

- over-trusting fluent outputs

- accelerating errors into decision-making

- misjudging capability based on presentation quality

And new opportunities:

- faster hypothesis generation

- augmented research workflows

- earlier detection of promising directions

The constraint shifts from access to ideas to the ability to filter them.

What to watch next

There are three concrete developments to monitor:

- Whether mathematicians formally analyze the specific ChatGPT-generated argument

- The emergence of tools that assist with proof verification, not just generation

- Hybrid workflows where AI proposes and experts validate at scale

If the argument contains something genuinely new, it will surface through that process. If not, it will quietly collapse under scrutiny, as many plausible proofs do.

The bottom line

ChatGPT did not solve a 60-year Erdős problem.

But it did produce something that looks close enough to a proof to convince thousands of people that it might have.

That gap between convincing output and verified truth is now one of the most important realities in applied AI.